Software Is Changing (Again) – The Dawn of Software 3.0

When Andrej Karpathy titled his recent keynote “Software Is Changing (Again),” it wasn’t just a nice slogan. It marks what he argues is a fundamental shift in the way we build, think about, and interact with software. Based on his talk at AI Startup School (June 2025), here’s what “Software 3.0” means, why it matters, and how you can prepare.

What Is Changing: The Three Eras

Karpathy frames software evolution in three eras:

| Era | What it meant | Key characteristics |
|---|---|---|
| Software 1.0 | Traditional code-first era: developers write explicit instructions, rules, algorithms. Think C++, Java, manual logic. | Highly deterministic, rule-based; heavy human specification; hard to scale certain tasks especially with unstructured or subtle data. |
| Software 2.0 | Rise of machine learning / neural nets: train models on data vs hand-coding every condition. The model “learns” patterns. | Better handling of unstructured data (images, text), but still needs labeled data, training, testing, deployment. Not always interpretable. |
| Software 3.0 | The new shift: large language models (LLMs) + natural language / prompt-driven interfaces become first-class means of programming. “English as code,” vibe coding, agents, prompt/context engineering. | You describe what you want; software (via LLMs) helps shape it. More autonomy, more natural interfaces. Faster prototyping. But also new risks (hallucinations, brittleness, security, lack of memory) and need for human oversight. |

Let’s break down what he means.

The “Old” World: Software 1.0

For the last 50+ years, we’ve lived in the era of what Karpathy calls “Software 1.0.” This is the software we all know and love (or love to hate). It’s built on a simple, deterministic principle:

- A human programmer writes explicit rules in a programming language like Python, C++, or Java.

- The computer compiles or interprets these rules into machine instructions.

- The CPU executes these instructions, producing a predictable output for a given input.

Think of a tax calculation function. The programmer defines the logic: if income > X, then tax = Y. It’s precise, debuggable, and entirely human-written. The programmer’s intellect is directly encoded into the logic. The problem? Its capabilities are limited by the programmer’s ability to foresee and explicitly code for every possible scenario. Teaching a computer to recognize a cat using Software 1.0 would require writing millions of lines of code describing edges, textures, and shapes—a nearly impossible task.
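As a minimal sketch of that tax example, here is what the Software 1.0 style looks like in Python. The threshold and rate are made-up illustrative values, and `income_tax` is a hypothetical name, not something from the keynote.

```python
def income_tax(income: float, threshold: float = 50_000.0, rate: float = 0.2) -> float:
    """Tax owed under a single made-up bracket: only income above the threshold is taxed."""
    if income < 0:
        raise ValueError("income must be non-negative")
    if income > threshold:
        # The rule is spelled out by hand; nothing here is learned from data.
        return (income - threshold) * rate
    return 0.0


print(income_tax(40_000))  # below the threshold, so no tax is owed
print(income_tax(60_000))  # only the 10,000 above the threshold is taxed
```

Every behavior of this function can be read directly off the source, which is both the strength and the limit of the approach.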

The Emerging World: Software 2.0

The second paradigm, “Software 2.0,” inverts this process. Instead of writing the rules, we curate data and specify a goal.

- A human programmer (or, increasingly, an “AI Engineer”) gathers a large dataset (e.g., millions of images of cats and “not-cats”).

- They define a flexible, neural network architecture—a blank slate capable of learning complex patterns.

- They specify a goal or a “loss function” (e.g., “minimize the number of incorrect cat identifications”).

- Using massive computational power (GPUs/TPUs), an optimization algorithm (such as stochastic gradient descent, with gradients computed by backpropagation) searches the vast space of possible weight settings to find one that best maps the inputs to the desired outputs.

The “code” of Software 2.0 isn’t a set of human-readable if/else statements. It’s the learned weights and parameters of the neural network: millions or billions of numbers that are very hard for a human to interpret. We didn’t write it; we grew it from data.

As Karpathy put it in his earlier “Software 2.0” essay, think of the dataset (together with the network architecture) as the source code, and the process of training as “compiling” that data into the final neural network.
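To make the "grown from data" idea concrete, here is a small sketch under loud assumptions: a single logistic unit stands in for a full neural network, the dataset is a toy one invented for illustration, and plain batch gradient descent is used as the optimizer. The names `train_logistic` and `predict` are hypothetical.

```python
import math


def train_logistic(xs, ys, lr=0.5, epochs=2000):
    """Fit p(y=1|x) = sigmoid(w*x + b) by batch gradient descent on the log loss."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        grad_w = grad_b = 0.0
        for x, y in zip(xs, ys):
            p = 1.0 / (1.0 + math.exp(-(w * x + b)))
            # Gradient of the log loss with respect to w and b for one example.
            grad_w += (p - y) * x
            grad_b += (p - y)
        w -= lr * grad_w / n
        b -= lr * grad_b / n
    return w, b


def predict(w, b, x):
    """Classify as 1 when the learned score is positive."""
    return 1 if w * x + b > 0 else 0


# Toy dataset (an assumption): the label is 1 for large x.
# Note that no rule like "x > 0.5" is written anywhere in the code.
xs = [0.0, 0.1, 0.2, 0.3, 0.4, 0.6, 0.7, 0.8, 0.9, 1.0]
ys = [0, 0, 0, 0, 0, 1, 1, 1, 1, 1]

w, b = train_logistic(xs, ys)
print(predict(w, b, 0.1), predict(w, b, 0.9))
```

The resulting "program" is just the pair `(w, b)`. Changing its behavior means changing the data or the loss and retraining, not editing a branch.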

The Rise of the “AI Engineer” and the LLM Operating System: Software 3.0

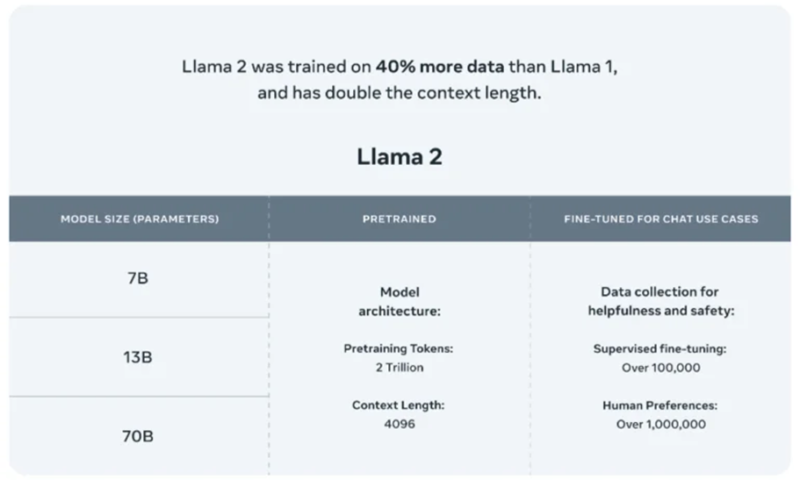

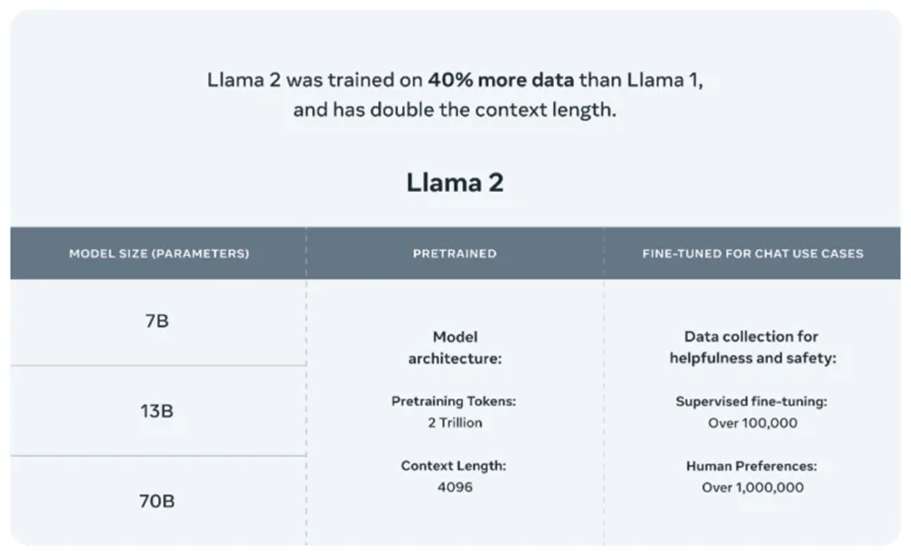

Karpathy’s most recent observations take this a step further with the explosion of Large Language Models (LLMs) like GPT-4. He describes the modern AI stack as a new kind of operating system.

In this analogy:

- The LLM is the CPU—the core processor, but for cognitive tasks.

- The context window is the RAM—the working memory.

- Prompting is the programming—the primary way we “instruct” this new computer.

- Tools and APIs (web search, code execution, calculators) are the peripherals and I/O.

This reframes the role of the “AI Engineer.” They now orchestrate these powerful, pre-trained models, “programming” them through sophisticated prompting, retrieval-augmented generation (RAG), and fine-tuning to build complex applications. This is the practical, applied side of the Software 3.0 shift that is currently creating a gold rush in the tech industry.
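The analogy above can be sketched as a tiny loop. Everything here is an assumption made for illustration: `toy_llm` is a hard-coded stand-in rather than a real model call, the word budget stands in for a token limit, and the `tool:` / `tool_result:` message format is invented.

```python
from typing import Callable, Dict, List

MAX_CONTEXT_WORDS = 50  # stand-in for a token limit; real limits are counted in tokens


def calculator(expression: str) -> str:
    """A 'peripheral': adds the integers in a string like '2+3+4'."""
    return str(sum(int(part) for part in expression.split("+")))


TOOLS: Dict[str, Callable[[str], str]] = {"calculator": calculator}


def toy_llm(context: List[str]) -> str:
    """Hypothetical stand-in for the 'CPU': asks for a tool once, then answers."""
    last = context[-1]
    if last.startswith("tool_result:"):
        return "answer: " + last.split(":", 1)[1].strip()
    return "tool: calculator 2+3+4"


def trim(context: List[str]) -> None:
    """Evict the oldest entries until the context fits the budget (the 'RAM' is finite)."""
    while len(context) > 1 and sum(len(m.split()) for m in context) > MAX_CONTEXT_WORDS:
        context.pop(0)


def run(prompt: str, max_steps: int = 5) -> str:
    """Drive the loop: the prompt is the 'program', tools are the I/O."""
    context = [prompt]
    for _ in range(max_steps):
        trim(context)
        reply = toy_llm(context)
        context.append(reply)
        if reply.startswith("tool:"):
            name, arg = reply[len("tool:"):].split(maxsplit=1)
            context.append("tool_result: " + TOOLS[name](arg))
        else:
            return reply
    return "answer: (step limit reached)"


print(run("What is 2+3+4?"))  # answer: 9
```

A real system would replace `toy_llm` with an actual model call and would manage context far more carefully than dropping the oldest message, but the division of roles is the same.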

Core Themes from Karpathy’s Keynote

Here are some of the biggest insights:

- Natural Language as Programming Interface: Instead of writing verbose code, developers (and increasingly non-developers) can prompt LLMs in English (or any other human language) to generate code, UI, and workflows. Karpathy demos “MenuGen,” a vibe-coded app prototype, as an example of how quickly one can build via prompts.

- LLMs as the New Platform / OS: Karpathy likens current LLMs to utilities or operating systems: infrastructure layers that provide default capabilities and can be built upon. Labs like OpenAI and Anthropic become “model fabs” that produce foundational layers, and everyone else builds on top of them.

- Vibe Coding & Prompt Engineering: He popularized the idea of “vibe coding,” where the code itself becomes less visible and you work through prompts and edits at a higher level of abstraction. With that comes the need for better prompt and context engineering to reduce errors.

- Jagged Intelligence: LLMs are powerful in some domains and weak in others. They may hallucinate, err at basic math, or make logically inconsistent decisions. Part of working well with this new paradigm is designing for those imperfections through human-in-the-loop review, verification, and testing.

- Building Infrastructure for Agents: Karpathy argues that software needs to be architected so LLMs / agents can interact with it: consume documentation and knowledge bases, keep memory/context, and manage feedback loops. Examples include llms.txt, agent-friendly docs, and file and knowledge storage that is easy for agents to read and interpret.
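One way to design for jagged intelligence is to put a cheap deterministic check between the model and the rest of the system. The sketch below is illustrative only: `flaky_model` is a hypothetical stub rather than a real model call, and the menu-item schema (`name`, `price`) is an assumption loosely inspired by the MenuGen demo, not its actual data format.

```python
import json
from typing import Callable, Optional


def validate_menu_item(raw: str) -> Optional[dict]:
    """Return the parsed item if it has a non-empty name and a non-negative price, else None."""
    try:
        item = json.loads(raw)
    except json.JSONDecodeError:
        return None
    if not isinstance(item, dict):
        return None
    name, price = item.get("name"), item.get("price")
    if not isinstance(name, str) or not name.strip():
        return None
    if isinstance(price, bool) or not isinstance(price, (int, float)) or price < 0:
        return None
    return item


def generate_with_checks(generate: Callable[[str], str], prompt: str, max_attempts: int = 3) -> dict:
    """Retry until the output passes validation; flag for human review if it never does."""
    for attempt in range(1, max_attempts + 1):
        item = validate_menu_item(generate(prompt))
        if item is not None:
            return {"status": "accepted", "attempts": attempt, "item": item}
    return {"status": "needs_human_review", "attempts": max_attempts, "item": None}


# Hypothetical stub standing in for a model call: malformed first, valid second.
_replies = iter(['{"name": "Soup", "price": "cheap"}', '{"name": "Soup", "price": 4.5}'])


def flaky_model(prompt: str) -> str:
    return next(_replies)


result = generate_with_checks(flaky_model, "Extract the first menu item as JSON.")
print(result["status"], result["attempts"])  # accepted 2
```

The validator is ordinary Software 1.0 code guarding a Software 3.0 component, and the `needs_human_review` status is where a person steps back into the loop.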

Final Thoughts: Is This Another Shift – Or the Same One Again?

Karpathy would say this is not just incremental: “Software is changing again” implies something qualitatively different. In many ways, Software 3.0 combines the lessons of Software 1.0 (performance, correctness, architectural rigor) and Software 2.0 (learning from data, dealing with unstructured inputs), and adds a layer where language, agents, and human-AI collaboration become central.

In a nutshell: we’re not just upgrading the tools; we’re redefining what software means.