The Powerhouses of Modern Computing: CPUs, GPUs, NPUs, and TPUs

The rapid advancement of technology has necessitated the development of specialized processors to handle increasingly complex computational tasks. This article describes the key features of four such processing units (CPUs, GPUs, NPUs, and TPUs) and their primary use cases.

Central Processing Unit (CPU)

The CPU, often referred to as the “brain” of a computer, is a versatile processor capable of handling a wide range of tasks. It excels in sequential operations, making it suitable for general-purpose computing.

- Key features: Sequential processing, efficient handling of complex instructions.

- Primary use cases: Operating systems, office applications, web browsing, and general-purpose computing.

Graphics Processing Unit (GPU)

Originally designed for rendering graphics, GPUs have evolved into powerful parallel processors capable of handling numerous calculations simultaneously.

- Key features: Parallel processing, massive number of cores, high computational power.

- Primary use cases: Machine learning, deep learning, scientific simulations, image and video processing, cryptocurrency mining, and gaming.
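
To make the sequential versus parallel distinction concrete, here is a minimal Python sketch. It runs on the CPU only and does not use a GPU; the function names (`brighten`, `brighten_sequential`, `brighten_parallel`) and the pixel values are hypothetical, chosen for illustration. The point is that each output depends only on its own input, so the work can be split into independent chunks, which is the property a GPU exploits across its many cores.

```python
from concurrent.futures import ThreadPoolExecutor


def brighten(pixel: int, amount: int = 40) -> int:
    """Brighten one 8-bit pixel value, clamping at 255."""
    return min(pixel + amount, 255)


def brighten_sequential(pixels):
    """Process pixels one after another, as a single core would."""
    return [brighten(p) for p in pixels]


def brighten_parallel(pixels, workers=4):
    """Split the pixels into chunks and process the chunks independently.

    Threads stand in for GPU cores here (an assumption of this sketch);
    this shows the structure of data-parallel work, not a real speed-up.
    """
    chunk_size = max(1, (len(pixels) + workers - 1) // workers)
    chunks = [pixels[i:i + chunk_size] for i in range(0, len(pixels), chunk_size)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        results = pool.map(brighten_sequential, chunks)  # map preserves chunk order
    return [p for chunk in results for p in chunk]


pixels = [0, 100, 200, 250, 30, 220]
print(brighten_sequential(pixels))
print(brighten_parallel(pixels))
```

Both calls produce the same list, because no pixel's result depends on any other pixel. Workloads with that shape are the ones that tend to benefit from a GPU.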

Neural Processing Unit (NPU)

Designed specifically for artificial intelligence workloads, NPUs are optimized for tasks like image recognition, natural language processing, and machine learning.

- Key features: Low power consumption, high efficiency for AI computations, specialized hardware accelerators.

- Primary use cases: Mobile and edge AI applications, computer vision, natural language processing, and other AI-intensive tasks.

Tensor Processing Unit (TPU)

Developed by Google, TPUs are custom-designed ASICs (Application-Specific Integrated Circuits) optimized for machine learning workloads, particularly those involving tensor operations.

- Key features: High performance, low power consumption, specialized for machine learning workloads.

- Primary use cases: Deep learning, machine learning research, and large-scale AI applications.
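
Tensor operations such as matrix multiplication reduce to many multiply-accumulate steps. The plain-Python sketch below spells those steps out. `matmul` is a hypothetical helper written for illustration, not a TPU API; a real accelerator would perform these multiply-accumulates in dedicated hardware rather than in nested loops.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    rows, inner, cols = len(a), len(b), len(b[0])
    if any(len(row) != inner for row in a):
        raise ValueError("inner dimensions do not match")
    out = [[0] * cols for _ in range(rows)]
    for i in range(rows):
        for j in range(cols):
            acc = 0
            for k in range(inner):
                acc += a[i][k] * b[k][j]  # one multiply-accumulate step
            out[i][j] = acc
    return out


a = [[1, 2], [3, 4]]
b = [[5, 6], [7, 8]]
product = matmul(a, b)
print(product)
```

The number of multiply-accumulate steps is rows × inner × cols (8 for these 2×2 inputs), so it grows quickly with matrix size. That growth is the reason hardware specialized for this one pattern can pay off in large models.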

Other Specialized Processors

Beyond these core processors, several other specialized processors have emerged for specific tasks:

- Field-Programmable Gate Array (FPGA): Highly customizable hardware that can be reconfigured after manufacturing to perform various tasks, for example signal processing.

- Data Processing Unit (DPU): A specialized processor designed to offload data-intensive tasks from the CPU. It’s particularly useful in data centers, where it handles networking, storage, and security operations. By taking over these functions, the DPU frees up the CPU to focus on other computational tasks. Primary use cases include data center infrastructure and security and encryption tasks.

- Vision Processing Unit (VPU): A processor specifically designed to accelerate computer vision tasks. It’s optimized for image and video processing, object detection, and other AI-related visual computations. VPUs are often found in devices such as smartphones, AR/VR headsets, surveillance cameras, and autonomous vehicles.

The Interplay of Processors

In many modern systems, these processors work together. For instance, a laptop might use a CPU for general tasks, a GPU for graphics and some machine learning workloads, and an NPU for specific AI functions. This combination lets each workload run on the hardware best suited to it, which improves both performance and energy efficiency.

The choice of processor depends on the specific application and workload. For computationally intensive tasks like machine learning and deep learning, GPUs and TPUs often provide significant performance advantages over CPUs. However, CPUs remain essential for general-purpose computing and managing system resources.
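
As a rough illustration of that choice, the guidance in this article can be written as a lookup table. The workload labels and the `suggest_processor` function are hypothetical and exist only for this sketch; real systems choose based on measured performance, power budget, and the hardware actually available.

```python
# Workload categories mapped to the processor this article associates with them.
# The labels are illustrative assumptions, not a standard taxonomy.
SUGGESTIONS = {
    "office applications": "CPU",
    "web browsing": "CPU",
    "gaming": "GPU",
    "scientific simulation": "GPU",
    "mobile ai": "NPU",
    "large-scale deep learning": "GPU or TPU",
    "data center networking": "DPU",
    "computer vision": "VPU",
}


def suggest_processor(workload: str) -> str:
    """Return the suggested processor, defaulting to the general-purpose CPU."""
    return SUGGESTIONS.get(workload.strip().lower(), "CPU")


print(suggest_processor("Gaming"))
print(suggest_processor("spreadsheet macros"))
```

Falling back to the CPU for unknown workloads mirrors the point above: the CPU remains the general-purpose default, and the other processors are chosen only when a workload matches their specialty.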

As technology continues to advance, we can expect even more specialized processors to emerge, tailored to specific computational challenges. This evolution will drive innovation and open up new possibilities in various fields.

In Summary:

- CPU is a general-purpose processor for a wide range of tasks.

- GPU is specialized for parallel computations, often used in graphics and machine learning.

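- TPU is a Google-designed ASIC optimized for machine learning workloads, particularly tensor operations.

- NPU is optimized for power-efficient neural network operations, often on mobile and edge devices.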

- DPU is designed for data-intensive tasks in data centers.

- VPU is specialized for computer vision tasks.

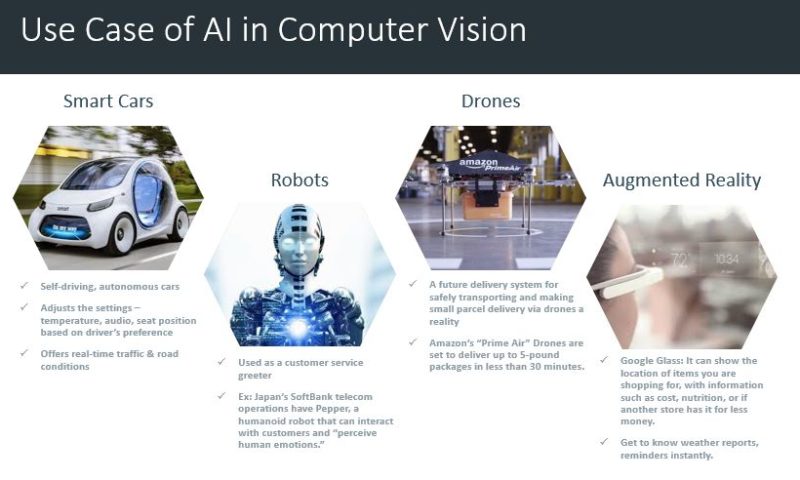

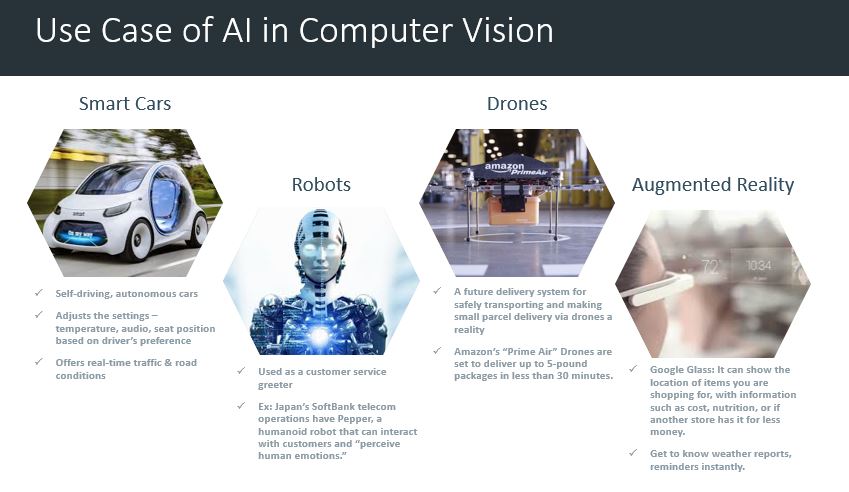

1. Computer Vision – Smart Cars (Autonomous Cars): According to IBM survey results, 74% of respondents expected that we would see smart cars on the road by 2025. A smart car might adjust its internal settings (temperature, audio, seat position, etc.) automatically based on the driver, report and even fix problems itself, drive itself, and offer real-time advice about traffic and road conditions.
